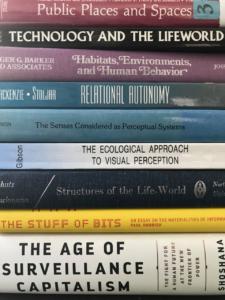

Classic phenomenologists and ecological psychologists have described and analyzed core existential categories of persons, things and environments. These core categories have been seen as essential to our perception and action choices, but also to ethics and values and questions of what it means to be human. Post-phenomenologists like Don Ihde have sought to extend these analyses to better understand our technologically mediated lives and how things, too, can in some sense be thought to have agency or at least participate in agentic structures. Thus, there has been some reshuffling of how to see the divisions between the core categories of things and persons. But what about “life-worlds” and environments – what happens when the background takes on agentic properties, or when it becomes a tool to be controlled by others from afar? Peter-Paul Verbeek has called this relation an “immersion” in technology. But where does such an immersion leave our individual and social agency? This question is at the heart of a current project of mine, which seeks to analyze surveillance-driven algorithmic personalization and which I worked on during my months at the CFS this fall.


One aspect that I am particularly interested in is contrasting what we might call old-fashioned analog and “dumb” spaces with new “smart” spaces. A smart space can consist either of entirely digitized interfaces, such as personalized tools and platforms online, or of analog spaces transformed by connected and AI-powered IoT devices. The key is that we are dealing with spaces and interfaces that have sensors and effectors, and some kind of algorithmic decision making that can transform the space and the options of the agents behaving in it.

What minimally needs to be theorized is the notion that goal-directed sensor-effector loops, which we historically have only encountered in other agents, persons or animals, are now embedded in environmental layouts, which precisely do not present themselves to us as having agency.

To make apparent how radical a departure this is from ordinary conditions of agency, I shall take a detour around the basic concept of affordances, namely the idea that objects and other people are perceived as “affording” various kinds of actions. A standard example is that a cup affords drinking from, but as interface designers know very well, a digital button can also afford “clicking”. As Gibson famously put it: “The affordances of the environment are what it offers the animal, what it provides or furnishes, either for good or ill” (Gibson 1979). Now, given this concept, we can think of all agency as taking place within some perceived field of affordances.

Ordinary object affordances are relatively stable and predictable and do not typically change in ways that dynamically depend on perceived actions and intentions of the agent. Rather, they mainly depend on the “physics” of their own structure in concert with that of the agent and the surrounding environment. Objects are therefore relatively predictable – which is also why we can incorporate tools within our actions and even as cyborg extensions of our bodies. There is no need for coercion or salary raises when it comes to making objects predictable; we simply have to understand their constitution well enough to trust and use their afforded effects.

Social affordances on the other hand are deeply dynamic and rely on complexly recurrent processes of mutual perception and information exchange between agents both within shared environments and across spatial distances. Other people and their social affordances are exciting in part due to their dynamism, but also potentially volatile. In the practice of navigating and managing actions within highly dynamic social spaces, we spend a great deal of energy reading, categorizing and playing towards the character traits and receptive means of other people. We try to establish emotional, empathetic and trusting social relations. But if another person appears too dangerous or risky – or simply too close for comfort – we typically distance ourselves from that person, both physically and informationally.

Now enter “smart” and “personalized” environments. Here we have “spaces” or layouts of affordances and navigation paths which appear “dumb,” but which morph and change given our behavior in these and other spaces. The surveillance and personal data-driven nature of smart spaces is incredibly important to understand. Note that in ordinary spaces objects do not perceive or read us and therefore do not reciprocally transform themselves except as a consequence of physical interactions. Other people, on the other hand, engage in precisely this potentially wonderful and potentially tricky recursive causality. We therefore use a lot of energy tracking others and their behavior, and with the help of this intel we choose which others to share our spaces with, or at least how we will behave in their presence.

As we design AI-driven, dynamically transforming affordance spaces, we must ask 1) whether we blur the difference between objects and other agents, 2) whether other agents are given too much asymmetric power to create effects in our near spaces while staying out of sight, and lastly 3) how these dynamics are further exacerbated by smart spaces typically being other-owned, proprietary and mostly for profit.

In brief, I hope with this project to help us better understand the conditions of social affordances in dumb versus smart spaces. I suggest that in smart spaces there are radically new circumstances that totally undermine past safety procedures in regard to keeping others’ actions at a distance. Further, current uses and abuses of these powers already suggest that smart spaces not only isolate us epistemically but also in many ways both hide other agents and undermine the empathetic relations and trustworthiness checks that we use to judge social affordances in “dumb” space. Thus, we can expect agents not only to suffer disorientation in regard to the parts of the world that are still shared, but also in certain ways actually to fail to move – in the basic sense of moving away from certain others and past preferences.

My time at CFS flew by, and ironically much of it was on Zoom as the second wave set in, so I hope to be back again – in analog space – at some point in the not-so-distant future. But this project is ongoing, so in the meantime please feel free to reach out if you are interested in similar issues.